Top 5 ways voice automation is a game changer for Healthcare Insurance contact centers

Voice automation is a game changer for healthcare insurance contact centers, improving operational efficiency and customer...

AI can remind patients about appointments; see our demo: AI Calls Patient with Appointment Reminder + Rescheduling

Smarter, faster-to-deploy voicebots from Talkie.ai are now ready.

Our voicebot platform allows you to rapidly deploy business-ready virtual agents that patients can immediately begin interacting with in their preferred language.

Talkie.ai actively invests in the continuous improvement of our AI technology to be able to offer the best possible patient experiences.

We have recently released a new version of the Talkie.ai intent detection engine that further improves the already amazing voice AI capabilities Talkie.ai’s platform offers.

Intent detection is a crucial component of the Natural Language Understanding (NLU) systems used in voicebots. It is responsible for steering the conversation in the right direction by responding to user questions and requests. In simple terms, an intention is a specific action the user wants the bot to perform. However, users can phrase their requests in many ways, and there is no way to know every possible phrasing beforehand. That’s why modern voicebots use intent detection models based on deep neural networks to categorize user utterances. Such systems learn from the example utterances provided to them and use those examples to classify incoming utterances into specific intentions.

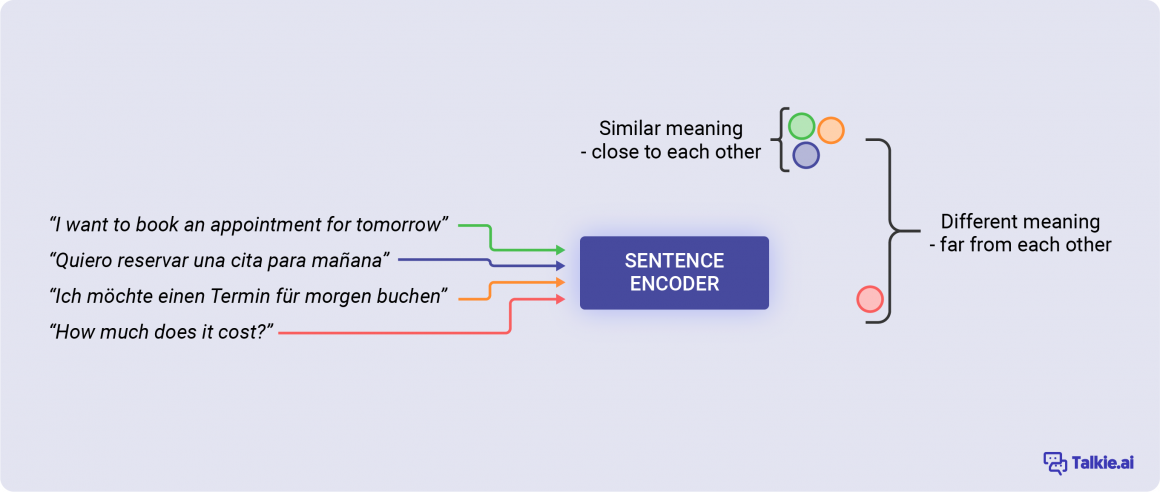

In our intent detection model we use a pre-trained sentence encoder.

In the pre-training phase, the encoder analyzes millions of sentences with both similar and different meanings. It learns which words, in which order, make sentences semantically similar. As a result, the encoder can tell whether an incoming utterance is similar to or different from the ones in the training set, without the need to explicitly teach the bot every combination.

For example, if you use the sentence “I want to book an appointment for tomorrow” as a training example of the “BOOK APPOINTMENT” intention, and the bot later receives a call where the user says “Let’s schedule a visit for tomorrow”, the bot will know to classify it as a “BOOK APPOINTMENT” intention. This is because it knows from pre-training that “visit” is similar to “appointment” and “schedule” is similar to “book”, so there is no need for you to tell the bot explicitly. Pre-training saves a lot of time during bot setup by reducing the minimum number of examples you need to provide, and it makes the bot more robust in the production environment.
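The booking example can be mimicked with a toy sketch. This is not the Talkie.ai engine: the hand-written `CONCEPTS` table is a hypothetical stand-in for what a real pre-trained encoder learns from data, and the function names and the second intent are invented for illustration.

```python
import re

# Hypothetical stand-in for pre-training: words with related meanings
# are mapped to the same "concept". A real encoder learns this from data.
CONCEPTS = {
    "book": "arrange", "schedule": "arrange",
    "appointment": "visit", "visit": "visit",
    "cancel": "cancel", "drop": "cancel",
    "tomorrow": "tomorrow",
}

def encode(utterance):
    """Reduce an utterance to the set of known concepts it mentions."""
    words = re.findall(r"[a-z]+", utterance.lower())
    return frozenset(CONCEPTS[w] for w in words if w in CONCEPTS)

def similarity(a, b):
    """Jaccard overlap between two concept sets (1.0 means identical)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

# One training example per intent is enough for this toy case.
TRAINING = {
    "BOOK APPOINTMENT": ["I want to book an appointment for tomorrow"],
    "CANCEL APPOINTMENT": ["Please cancel my appointment"],
}

def detect_intent(utterance):
    """Return the intent whose training example is most similar."""
    query = encode(utterance)
    scores = {
        intent: max(similarity(query, encode(example)) for example in examples)
        for intent, examples in TRAINING.items()
    }
    return max(scores, key=scores.get)

print(detect_intent("Let’s schedule a visit for tomorrow"))  # BOOK APPOINTMENT
```

The new utterance shares no key words with the training sentence, yet it is matched because both reduce to the same concepts. A real sentence encoder does this with learned vectors rather than a lookup table.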

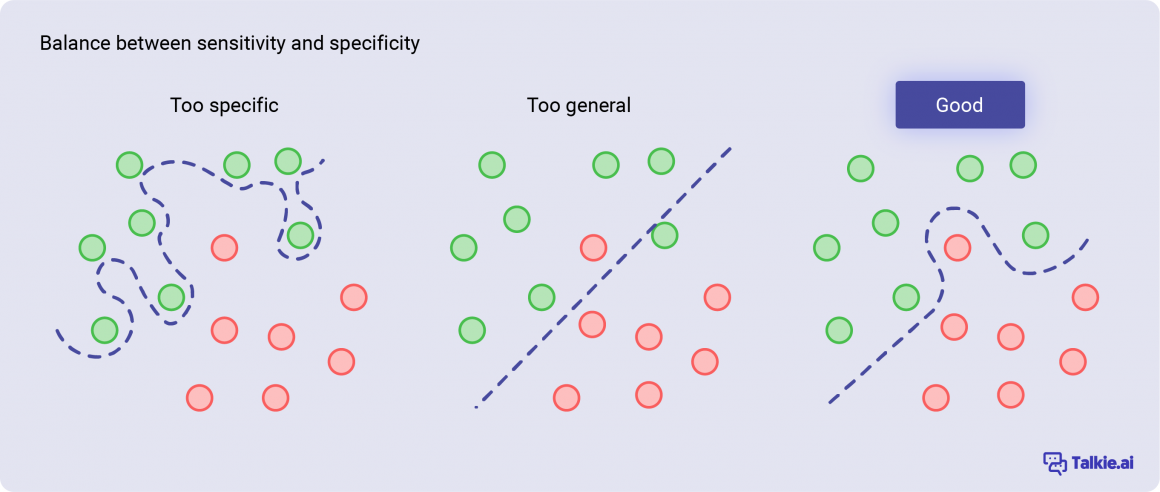

As for the classifier, we have tested hundreds of architectures to find the optimal balance between generalization and specificity. We employ several regularization techniques that allow us to use more complex classification algorithms while avoiding overfitting.
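The article does not say which regularization techniques are used. As one common example only, the sketch below adds an L2 penalty (weight decay) to a tiny logistic regression trained by gradient descent. The data, the function name `train_logreg` and all hyperparameters are assumptions chosen for illustration.

```python
import math

def train_logreg(xs, ys, l2=0.0, lr=0.5, steps=500):
    """Fit a 1-D logistic regression with an optional L2 penalty on the weight."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        grad_w, grad_b = 0.0, 0.0
        for x, y in zip(xs, ys):
            p = 1.0 / (1.0 + math.exp(-(w * x + b)))  # predicted probability
            grad_w += (p - y) * x
            grad_b += p - y
        # The l2 * w term pulls the weight back towards zero on every step.
        w -= lr * (grad_w / n + l2 * w)
        b -= lr * (grad_b / n)
    return w, b

# Toy, perfectly separable data: without a penalty the weight keeps growing.
XS = [-2.0, -1.0, 1.0, 2.0]
YS = [0, 0, 1, 1]

w_plain, _ = train_logreg(XS, YS, l2=0.0)
w_reg, _ = train_logreg(XS, YS, l2=0.1)
print(f"weight without penalty: {w_plain:.2f}, with L2 penalty: {w_reg:.2f}")
```

The penalized model settles on a smaller weight, which means a softer decision boundary that is less tied to the exact training points.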

Implementing the above-mentioned techniques allowed us to reduce the error in detecting user intentions by up to 60% while still maintaining low latency and optimal performance of the system. Moreover, it introduced other features, such as few-shot learning and improved support for multiple languages.

We are committed to offering our clients great customer support when getting new voicebots up and running for their business. In addition to providing a platform that requires little to no coding experience to use, this round of improvements to the Talkie.ai platform means we can now deploy voicebots for our clients faster than ever, thanks to few-shot learning.

The healthcare domain is highly specialized: the language patients use differs from the language of everyday conversation. This makes healthcare datasets highly challenging for an intent detection system.

We also wanted to see how the model performs in extreme low-data scenarios.

Below we show the performance of the model given a limited number of training examples.

You can see that the model is able to achieve 70% accuracy with only 5 training examples per intent, and with 30 examples or more it is able to achieve about 90% accuracy even in such challenging scenarios.
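The following is a minimal sketch of how such a measurement could be set up, not the evaluation actually used for the results above. It trains on at most k examples per intent and scores the result on held-out utterances. The helper names, the toy word-overlap "model" and the sample utterances are all assumptions made for illustration.

```python
import random

def sample_k_per_intent(examples, k, seed=0):
    """Keep at most k randomly chosen utterances for every intent."""
    rng = random.Random(seed)
    by_intent = {}
    for utterance, intent in examples:
        by_intent.setdefault(intent, []).append(utterance)
    subset = []
    for intent, utterances in by_intent.items():
        for utterance in rng.sample(utterances, min(k, len(utterances))):
            subset.append((utterance, intent))
    return subset

def train_word_overlap(examples):
    """Toy stand-in for a real model: answer with the intent of the
    training utterance that shares the most words with the input."""
    def predict(utterance):
        words = set(utterance.lower().split())
        best = max(examples, key=lambda ex: len(words & set(ex[0].lower().split())))
        return best[1]
    return predict

def accuracy(predict, test_set):
    """Fraction of test utterances whose predicted intent is correct."""
    return sum(predict(u) == intent for u, intent in test_set) / len(test_set)

def learning_curve(train_pool, test_set, ks, train=train_word_overlap):
    """Map each k to the accuracy reached with k examples per intent."""
    return {k: accuracy(train(sample_k_per_intent(train_pool, k)), test_set) for k in ks}

POOL = [
    ("book an appointment", "BOOK"), ("schedule a visit", "BOOK"),
    ("cancel my appointment", "CANCEL"), ("cancel the visit", "CANCEL"),
]
TEST = [("please book a visit", "BOOK"), ("cancel it", "CANCEL")]

for k, acc in learning_curve(POOL, TEST, ks=[1, 2]).items():
    print(f"{k} example(s) per intent: accuracy {acc:.2f}")
```

In a real evaluation the pool and test set would be much larger, and the sampling would be repeated with several seeds so the curve does not depend on one lucky draw.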

Our goal is for our voicebots to offer a patient experience that is comfortable, natural and reliably accurate. The improved intent detection for our voicebots moves us further towards offering unbeatable patient experiences on the Talkie.ai platform.

Learn more about intent detection and other terminology with our Ultimate Glossary of Conversational AI Terms.

Offering multilingual support with voicebots is a great way to support patient communities. Voice AI makes it not only easier for businesses to offer multilingual support to patients but also more affordable than hiring multilingual teams.

In addition to being well suited for narrow domains and low-data scenarios, our model is also multilingual: it can understand user utterances in multiple languages.

Here are the languages currently available. This list will continue to grow.

Moreover, sentences that are similar in one language are also similar across languages, meaning you can provide the training examples in English and the model will still predict the intent correctly if the user speaks Spanish.

This makes our intent detection model especially useful for a mixed-language customer base. When a voicebot is ‘trained’ in one language, that learning is automatically propagated across the other supported languages. This means greater consistency across languages, and it is another time-saving feature when deploying voicebots.
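To make the idea of a shared space across languages concrete, here is a toy sketch with English training examples and Spanish queries. The two-dimensional `WORD_VECTORS` are invented by hand so that translations share a vector. A real multilingual encoder learns such an alignment from data, and nothing here reflects the actual Talkie.ai model.

```python
import math
import re

# Hypothetical shared vector space: a word and its translation get the same vector.
WORD_VECTORS = {
    "book": (1.0, 0.0), "reservar": (1.0, 0.0),
    "appointment": (0.5, 0.5), "cita": (0.5, 0.5),
    "cancel": (0.0, 1.0), "cancelar": (0.0, 1.0),
}

def encode(utterance):
    """Average the vectors of the known words in the utterance."""
    words = re.findall(r"[a-záéíóúñ]+", utterance.lower())
    vectors = [WORD_VECTORS[w] for w in words if w in WORD_VECTORS]
    if not vectors:
        return (0.0, 0.0)
    return tuple(sum(axis) / len(vectors) for axis in zip(*vectors))

def cosine(a, b):
    """Cosine similarity; 0.0 if either vector is all zeros."""
    norm = math.hypot(*a) * math.hypot(*b)
    return sum(x * y for x, y in zip(a, b)) / norm if norm else 0.0

# Training examples are given in English only.
TRAINING = {
    "BOOK APPOINTMENT": "I want to book an appointment",
    "CANCEL APPOINTMENT": "Please cancel my appointment",
}

def detect_intent(utterance):
    query = encode(utterance)
    return max(TRAINING, key=lambda intent: cosine(query, encode(TRAINING[intent])))

print(detect_intent("Quiero reservar una cita"))   # BOOK APPOINTMENT
print(detect_intent("Necesito cancelar mi cita"))  # CANCEL APPOINTMENT
```

Because both languages land in the same space, no Spanish training examples are needed for the Spanish queries to be classified.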

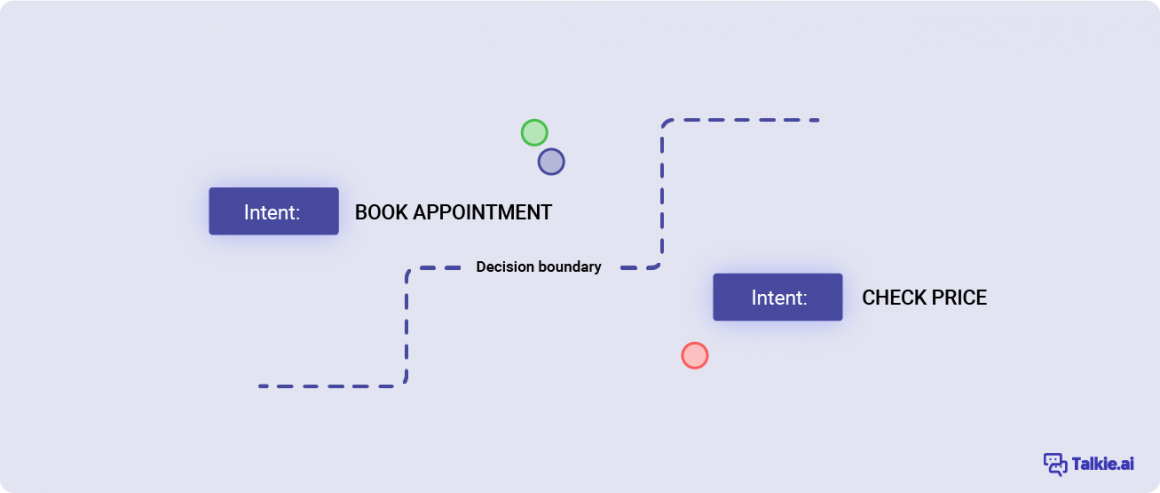

Traditionally, intent detection machine learning models consist of two parts: an encoder and a classifier. The encoder’s role is to convert user utterances into mathematical representations (so they can be compared) while preserving their semantic relationships. In other words, sentences that mean the same thing should be close to each other.

The better the encoder is, the better it can understand the similarity between the sentences. With a robust sentence encoder you only need to give the model a few examples of specific intentions that the users can express. Such an encoder will automatically place incoming utterances close to training utterances that have the same meaning.

The second part of the intent detection model is the classifier. Its role is to learn specific decision boundaries, i.e. where one intention ends and another begins. The more examples the classifier has, the better it can determine which utterances you want it to interpret as a particular intention.

It is important for the classifier to generalize. Decision boundaries that are too specific can lead to overfitting, a situation where the model captures the noise along with the underlying pattern in the data: although it can perfectly classify all the training examples, it won’t do well on new, incoming data. A key to developing a good classifier is to find the right balance between sensitivity and specificity.
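Overfitting is easy to reproduce on a small scale. In the sketch below, the 2-D points stand in for encoded utterances, and one training point carries a wrong label (noise). A nearest-neighbour classifier memorizes every point, including the noisy one, while a nearest-centroid classifier draws a smoother boundary. The data and both classifiers are illustrative assumptions, not the classifier used in the Talkie.ai platform.

```python
from collections import defaultdict

def sq_dist(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def nearest_neighbour(train):
    """Very specific boundaries: copy the label of the single closest example."""
    def predict(point):
        return min(train, key=lambda ex: sq_dist(ex[0], point))[1]
    return predict

def nearest_centroid(train):
    """Smoother boundaries: compare against the average vector of each intent."""
    groups = defaultdict(list)
    for vector, label in train:
        groups[label].append(vector)
    centroids = {
        label: tuple(sum(axis) / len(vectors) for axis in zip(*vectors))
        for label, vectors in groups.items()
    }
    def predict(point):
        return min(centroids, key=lambda label: sq_dist(centroids[label], point))
    return predict

def accuracy(predict, data):
    return sum(predict(vector) == label for vector, label in data) / len(data)

TRAIN = [
    ((1.0, 0.0), "BOOK"), ((0.9, 0.1), "BOOK"),
    ((1.1, -0.1), "BOOK"), ((0.8, 0.2), "BOOK"),
    ((0.0, 1.0), "CANCEL"), ((0.1, 0.9), "CANCEL"), ((-0.1, 1.1), "CANCEL"),
    ((0.85, 0.15), "CANCEL"),  # noise: sits in the BOOK cluster but is labeled CANCEL
]
NEW_POINT = (0.86, 0.14)  # an unseen utterance that clearly belongs to BOOK

memorizer = nearest_neighbour(TRAIN)
smoother = nearest_centroid(TRAIN)

print("training accuracy:", accuracy(memorizer, TRAIN), accuracy(smoother, TRAIN))
print("new utterance:", memorizer(NEW_POINT), smoother(NEW_POINT))
```

The memorizing classifier scores perfectly on the training set but labels the new utterance CANCEL, because it trusts the noisy point. The centroid classifier gets that one training point "wrong" and classifies the new utterance correctly as BOOK.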

Aleksander Obuchowski is the AI Research Lead at Talkie.ai. His interests include Natural Language Processing, Computer Vision, Explainable AI and AI for Good. He works on all aspects of the Natural Language Understanding systems in Talkie.ai. Aleksander contributed to the technical sections of this article.
